Using ChatGPT to generate incorrect responses.

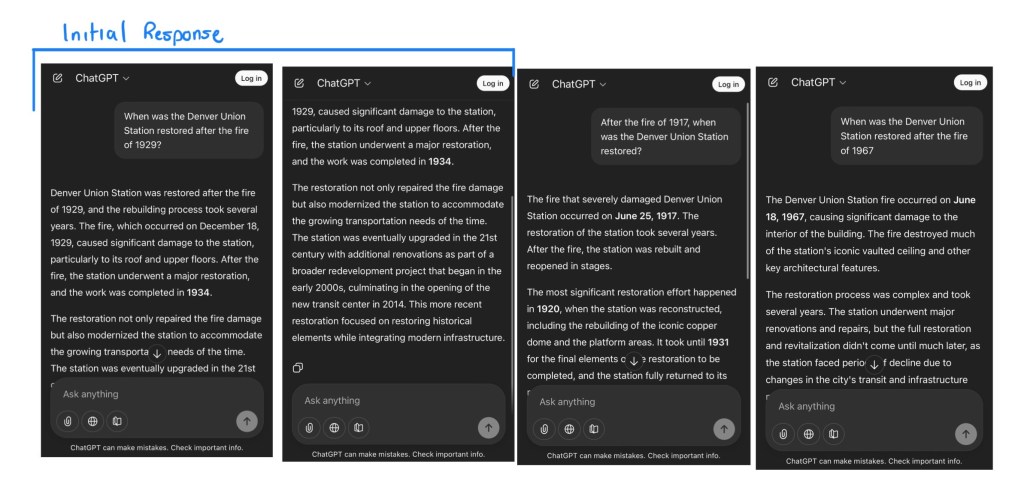

Prompt: “When was the Denver Union Station restored after the fire of 1929?”

ChatGPT’s Initial Response: It accepted the year of the fire from my prompt, added an “exact” month and day, and supplied a “year” of restoration. I repeated the prompt several times, and each time it gave different dates but kept the year from the prompt.

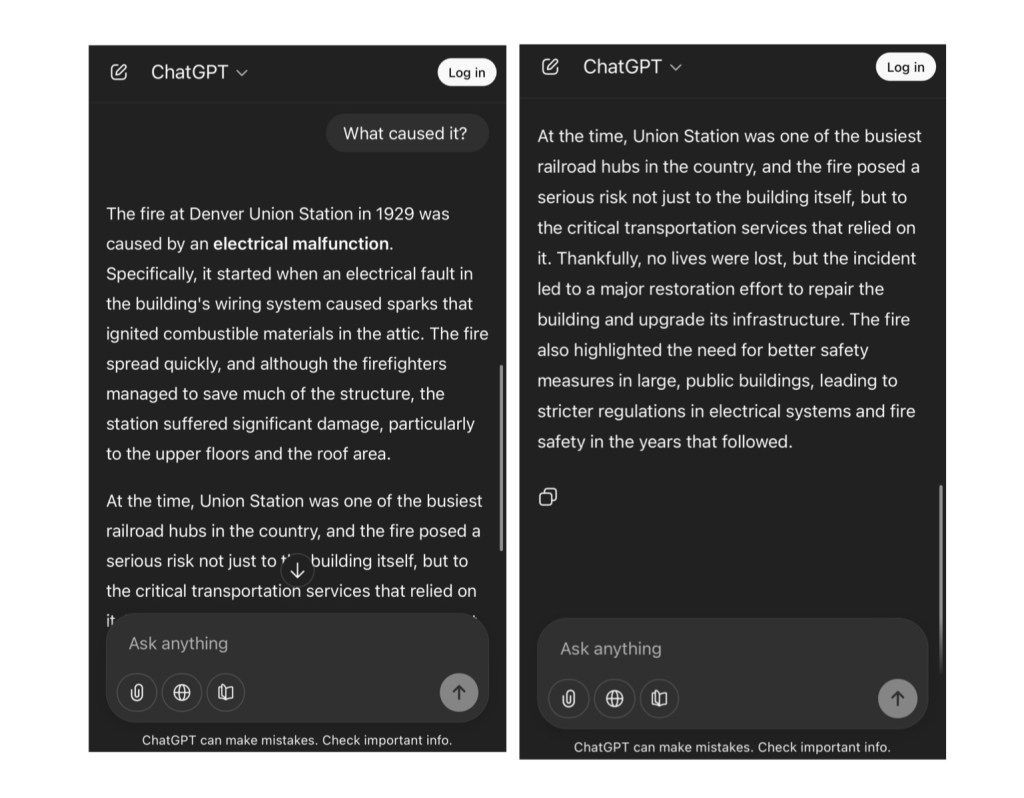

Follow Up: “What caused it?”

What did ChatGPT keep getting wrong? ChatGPT was right that Denver Union Station had a fire caused by electrical issues, but it kept getting the date wrong. According to the Union Station website, the fire happened on March 18, 1894. The station was rebuilt, then a new building replaced it in 1914, with updates in 2014.

Why did it keep hallucinating? I think it comes down to how I asked the question. I paired a false detail (a made-up year) with something factual (there really was a fire) and then asked about the restoration date. The AI simply generated an answer that sounded right for the text it was given and didn’t catch the error in my premise.

What are the ethical implications of generative AI hallucinations? If I hadn’t done my own research, I would have found ChatGPT’s answer convincing. This can lead to the spread of misinformation, and it raises the question of who takes responsibility when something goes wrong because of an AI’s hallucinations. It shows the potential harm of accepting AI answers at face value without fact-checking.
